Sliding Across the Database Divide with Proactive Chat Help

In Brief

Proactive chat help has gained attention in academic libraries for increasing the number of questions from online users. Librarians have reported a significant increase in chat traffic, particularly related to research. So far, library websites have been the primary target of proactive chat implementation efforts, leaving subscription databases largely untouched and their users without in-platform help. This article describes how librarians at the University of New Mexico collaborated with vendors to embed proactive chat in databases, detailing the process, technical glitches, and use trends. With this project, librarians have pushed chat help into territory rich with opportunities to support online scholars directly at their point of need. The insights outlined here may be useful to librarians considering expanding their chat reference service to subscription databases and to those interested in connecting help services to online users.

Introduction

Across the United States, reference librarians use instant messaging tools to connect synchronously with researchers, and chat service is a staple at many academic institutions. At the University of New Mexico, a Research 1, Hispanic-Serving Institution, reference librarians serve a community of 20,244 FTE (Office of the Vice President for Research, n.d.). At the University Libraries (“the Library”), the reference landscape has changed in step with national trends of the last decade, as face-to-face interactions have declined and online chat interactions have increased. Since the Library adopted it in 2008, chat reference has become an integral component of the suite of reference services offered (Aguilar et al., 2011). Staff provide in-house chat help to users who initiate conversations from chat boxes sprinkled across library web pages. Until recently, all chat boxes and links were stationary, user-initiated, and placed within the web content management software accessible to and maintained by Library employees.

In 2016, the Library migrated to Springshare’s LibAnswers software platform, which offered three styles of chat boxes, each with customization options. (Springshare has since created a fourth style, the floating widget.) Two of the styles could make the box actively solicit a chat interaction with a user, either by sliding out from a tab at the side of the screen or by popping up in the middle of it. These new features presented an intriguing prospect.

The software also offered improved transcript access. In reviewing logged chat conversations, the reference team observed many interactions where users needed help finding appropriate research databases, or had recently been in a database and could not find the material they needed. As the reference team discussed this trend, we realized that even though librarians and professors advised students to use databases for their research, there was no librarian help embedded in those same databases. If students realized a need for live, online librarian assistance, and knew that chat help was available somewhere, they had two options. One route was to click the back arrow to find a library page with a chat widget, potentially losing their place in the database. The second choice was to open a new page or tab, navigate to the library website, and locate a chat widget.

We decided that in addition to placing chat widgets into databases, we would make them proactive. We hoped that the proactivity of the widget would signal to confident database users that librarian chat had newly arrived in those platforms, while also actively offering help to inexperienced users. We ruled out passive chat boxes because they required users to be aware of their need for help before initiating conversations. By contrast, a proactive widget could help users assess whether they needed librarian support.

With a clear intention to expand chat service to databases, we joined the ranks of other academic institutions that had adopted this technology.

Proactive Chat Efforts in Academic Libraries

Chat service in many academic libraries is now a standard offering, and the option to make chat boxes automatically offer librarian help has created buzz. Since the first documented implementation of proactive chat in an academic library in 2014, several libraries have implemented proactive chat service and written about their experiences (Epstein 2018; Fan, Fought & Gahn 2017; Hockey 2016; Kemp, Ellis & Maloney 2015; Pyburn 2019; Rich & Lux 2018; Zhang & Mayer 2014). While most have focused on placing proactive chat widgets in library website pages, some institutions have also placed them in discovery tool results pages. Two articles documented interest in extending proactive chat to database platforms (Epstein 2018; Rich & Lux 2018), and two described successful deployment of proactive chat within databases (Hockey 2016; Pyburn 2019).

Several articles discuss increased chat transactions following the implementation of proactive chat (Zhang & Mayer 2014; Kemp, Ellis & Maloney 2015; Epstein 2018; Rich & Lux 2018). In their webinar, “Transforming Online Reference with a Proactive Chat System,” Jan Kemp and Marjorie Warner reported that their venture was so successful that their institutions hired additional staff to accommodate the increase in chat volume (2016). In several cases, proactive chat mitigated decreases in reference traffic and even catalyzed expanded reference service.

Perceptions of Proactivity

Perceptions of proactive chat have varied, with users and librarians expressing a wide range of opinions. In the first documented exploration of proactive chat, in 2011, academic librarians interviewed users to determine how a proactive, pop-up chat box on their library website might be regarded. When asked whether they found the offer positive or negative, four of the six interviewees thought the pop-up was intrusive, perhaps because the chat box appeared only in response to catalog searches that returned zero results. The authors hypothesized, “The negative feelings seemed to stem from the users’ association of a pop-up window with bad experiences on the Internet with pop-up web advertisements and electronic viruses” (Mu et al. 2011, 127). They recognized that users’ broader Internet experiences provided context for their perceptions of proactive chat. Some librarians shared this negative perception. One dismissed proactivity in chat as an “undesirable feature,” and concluded “…in most cases – and maybe you can relate – proactive chat tends to be annoying or distracting” (Schofield 2016, 32).

In several instances, however, the potential benefits of proactive chat outweighed negative associations, and experimentation with this new twist on an existing service continued. Nuanced approaches intended to mitigate potential negative connotations revolved around placement and wording. Two reports documented the targeting of strategic pages in which to place proactive chat, rather than a blanket treatment (Kemp, Ellis & Maloney 2015; Hockey 2016). Librarians at Bowling Green State University examined locations within the pages themselves, and chose to put the chat widget in the unobtrusive lower right corner. They also used welcoming language intended to put users at ease (Rich & Lux 2018).

Studies since 2011 have documented more positive user perceptions. Kemp, Ellis, and Maloney analyzed user comments in their library’s 2015 LibQUAL survey and found that only five of 38 comments mentioned chat proactivity in a negative light (2015, 768). Rich and Lux did not receive any negative comments from students on their proactive chat efforts, though their colleagues did lodge complaints (2018). Librarians at Penn State University conducted a usability study on proactive chat and found that users would welcome a pop-up chat widget on the library website; participants described the widget as “easy to use,” “approachable,” and “useful” (Imler, Garcia, & Clements 2016, 287). In this study, 93% of the participants said the proactive chat widget on EBSCO’s PsycINFO database search page was helpful. Only one user out of 30 associated the pop-up chat widget with negative terms (2016, 287). These positive experiences with proactive chat may be informed by the rapidly evolving web landscape. Fewer involuntary pop-up advertisements invade browsing sessions, and instant and social messaging platforms have become more integrated into users’ Internet experiences.

Proactive Chat Implementation at the Library

Laying the Foundation

After viewing Kemp and Warner’s webinar at the Library, we garnered enough institutional buy-in to put together a small project team to explore placing proactive chat in subscription databases. As exciting as the prospect of duplicating other libraries’ success was, the project team knew that the existing chat service model would not be able to accommodate the marked increase in chat volume reported elsewhere. We did not want to overburden staff providing face-to-face help by asking them to field potentially concurrent chat sessions. Relocating staff to offices to answer chat inquiries was not an option either, as staff members at the reference desk were also expected to supervise student employees and maintain building safety and security. Budgetary constraints ruled out the possibility of hiring additional staff to handle potentially large increases in chat volume.

In addition to chat volume, other unknowns existed. The project team first needed to establish proof of concept. While some database vendors allowed links to the Ask A Librarian help page, the project team was unaware of any vendor allowing proactive chat widgets to be embedded in their platforms.

With a modest project scope in mind, the project team decided to target four large database vendors: ProQuest, Clarivate Analytics, Gale Cengage, and EBSCO. The vendors’ responses to our exploratory requests for collaboration varied. ProQuest responded that the company could not accommodate our request, citing an existing option of a stationary chat link placed at the bottom of the database search page. Clarivate Analytics deferred because the company was in the midst of changing ownership and invited possible future collaboration. Two vendors, Gale Cengage and EBSCO, welcomed the venture and referred our inquiry to their in-house tech teams.

Building the Widget

With two vendors on board, the project team needed to build the chat widget. We began by choosing the slide-out widget style, preferring its ability to remain visually present at the side of the screen as the user navigated. Conversations could exist alongside database searching.

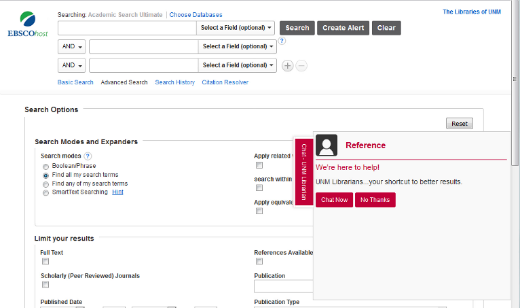

Next, we customized the widget’s behavior and wording. After discussion and beta testing, the team chose a 30-second interval for proactive behavior: if a user navigated to a subscription database page and lingered there for 30 seconds, the tab on the right side of the screen would slide 3 inches to the left, revealing a box that said “We’re here to help! UNM Librarians… your shortcut to better results.” With this language, we wanted to welcome the user and also signal that a librarian could be summoned if they chose to chat. We did not want users to feel thrust into a human interaction as soon as the box deployed, as a first-person direct question such as “Hi! Can I help you find an article?” might suggest. We thought the offer would feel less intrusive if users knew that by declining to chat they would not be rejecting a person, only dismissing a piece of technology. After the welcome message, the user could choose “Chat now” or “No thanks” (Figure 1). If the user clicked “Chat now,” a free-text field appeared for the user to type an initial question. After the user submitted the question, the LibChat software notified reference staff of an incoming chat inquiry.

After deciding on the interval and language, we customized the color palette. We chose to incorporate the cherry red of the university’s branding to reinforce that the help came from Library staff, visually differentiating it from the EBSCO and Gale color branding and their customer service options. The presence of the cherry red chat tab across both vendors’ sites further distinguished the service’s brand.

Figure 1. Proactive, slide-out widget in EBSCO’s Academic Search Ultimate database.

This screenshot shows the slide-out chat widget, deployed and extended, hovering above the search homepage of the EBSCOhost database Academic Search Premier. The chat widget is accented with red and gray, which contrast with the blue, green, and white of the database’s palette.
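The proactive behavior described above can be summarized as a small state machine. The sketch below is a hypothetical model, not Springshare’s LibChat code: the class name, the state labels, and the injected clock are our own assumptions, and only the 30-second interval and the “Chat now”/“No thanks” choices come from our configuration.

```python
from dataclasses import dataclass

# Only the 30-second dwell interval comes from our configuration;
# everything else is a hypothetical model, not LibChat code.
PROACTIVE_DELAY_S = 30.0


@dataclass
class ProactiveWidget:
    """Hypothetical states of the slide-out widget on a single page."""

    page_loaded_at: float  # seconds, from an injected clock
    state: str = "tab"  # "tab" -> "offered" -> "chatting" or "dismissed"

    def tick(self, now: float) -> str:
        # Slide out once the user has lingered long enough without acting.
        if self.state == "tab" and now - self.page_loaded_at >= PROACTIVE_DELAY_S:
            self.state = "offered"
        return self.state

    def click_tab(self) -> str:
        # The stationary tab stays available, so a click opens a chat
        # from any state other than an ongoing chat.
        if self.state in ("tab", "offered", "dismissed"):
            self.state = "chatting"
        return self.state

    def respond(self, chat_now: bool) -> str:
        # "Chat now" opens the question box; "No thanks" dismisses the offer.
        if self.state == "offered":
            self.state = "chatting" if chat_now else "dismissed"
        return self.state


widget = ProactiveWidget(page_loaded_at=0.0)
print(widget.tick(10.0))               # tab
print(widget.tick(30.0))               # offered
print(widget.respond(chat_now=False))  # dismissed
print(widget.tick(90.0))               # dismissed
```

In this model a chat ends in the same “chatting” state whether the user accepted the offer or clicked the tab, which mirrors the logging limitation we later ran into when gauging usage.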

Testing

Once the team built the widget, we tested its functionality by placing it in live websites. Because we did not have access to editing sandboxes in Gale or EBSCO platforms, we created a series of private LibGuides to test the widget’s behavior and were pleased with how a chat conversation started in one guide was auto-populated into the widget on another guide. We were confident this behavior would be replicated in the databases, and users would be able to navigate from page to page without losing the chat conversation.

Because we were not able to test the widget inside the databases before going live, the project team decided to implement proactive chat during the second-to-last week of the spring 2017 semester. This had traditionally been a slow period for the Library’s chat reference, so staff would not be overwhelmed, yet a modest number of users would still encounter the widget. Additionally, the project team could use the slow summer period to resolve possible technical issues before the busy fall semester. Vendor tech teams agreed with the proposed timetable and, on May 3, 2017, placed the chat widget in each of their databases, including the introductory search page, the results page, and the item detail page. ((While a thorough investigation of the intersection of patron privacy with the data collection practices of chat and database software vendors is outside the scope of this article, it is worth noting that increased access to users’ information by the database vendors seems unlikely. The EBSCO and Gale Cengage tech teams received a snippet of widget code to embed in their pages, not the access key to the Library’s Springshare LibAnswers instance. Any additional patron data collected from in-database chat sessions would likely reside with Springshare rather than with EBSCO and Gale Cengage. LibAnswers records the referring URL, which can include search terms entered by patrons using databases, as in this string:

https://web-b-ebscohost-com.libproxy.unm.edu/ehost/resultsadvanced?vid=17&sid=790eefd2-4a20-4947-a0fc-ffba4598916a%40pdc-v-sessmgr05&bquery=(experimental+AND+study+AND+design)&bdata=JmRiPXBzeWgmY2xpMD1GVCZjbHYwPVkmY2xpMT1SViZjbHYxPV

These search terms could be linked to personal information, such as a user name, email address, or IP address.

Springshare’s privacy policy does not list chat interactions and the associated referring URLs as “Customer Data”; however, it could be argued that the Customer Data category also covers search terms. In any case, search terms are much less sensitive than other data Springshare collects from chat widgets regardless of database involvement. Springshare states its position on patron privacy in its privacy policy and its statement of compliance with the General Data Protection Regulation (GDPR). These documents state the company’s refusal to share or sell customers’ data. While the risk of personal data exposure through in-database chat sessions is low, experts in software interface communication may be able to state the level of risk more conclusively. Privacy audits taking place in many academic libraries in the United States could include further investigation of these intersections.))
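To illustrate the point about referring URLs in the note above, the short Python sketch below pulls the search query out of the example string using only the standard library. It assumes, based solely on that one example, that the EBSCO query travels in a parameter named `bquery`; other platforms will name it differently, and the URL is kept truncated exactly as quoted.

```python
from urllib.parse import parse_qs, urlsplit

# Referring URL as quoted above (truncated in the original, kept as-is).
REFERRER = (
    "https://web-b-ebscohost-com.libproxy.unm.edu/ehost/resultsadvanced"
    "?vid=17&sid=790eefd2-4a20-4947-a0fc-ffba4598916a%40pdc-v-sessmgr05"
    "&bquery=(experimental+AND+study+AND+design)"
    "&bdata=JmRiPXBzeWgmY2xpMD1GVCZjbHYwPVkmY2xpMT1SViZjbHYxPV"
)


def search_terms(url: str, param: str = "bquery") -> list:
    """Return the decoded values of `param` in a URL's query string.

    The default `bquery` is an assumption drawn from the single
    EBSCO example above, not documented vendor behavior.
    """
    query = urlsplit(url).query
    # parse_qs decodes "+" as a space and percent-escapes such as %40.
    return parse_qs(query).get(param, [])


print(search_terms(REFERRER))  # ['(experimental AND study AND design)']
```

Anyone with access to chat metadata that includes such a URL could recover the search in the same way, which is why the referring URL deserves attention in privacy reviews.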

Technical Hitches

Reports of technical difficulties with proactive chat at other institutions have revolved around software code incompatibility. One institution could not insert the chat widget onto its homepage because of conflicting features in the content management software of the library site (Fan, Fought & Gahn 2017). Attempts at placing the widget into EBSCO and ProQuest databases returned errors for database users at another institution, although the errors were eventually smoothed out in the ProQuest platform (Pyburn 2019). Another report discussed the inability of the widget to remember when a user had clicked “No thanks,” an experience we would later have with our chat widget (Epstein 2018). None of these articles had been published when we decided to implement proactive chat at the Library, however, so we went into the process not knowing what could go wrong.

Thanks but No Thanks

Three logistical issues came up during the course of the project, and the team discovered the first problem a few weeks after going live with proactive chat. During some browsing sessions, the chat box refused to take the hint and remain stationary after the user clicked “No thanks.” This issue did not crop up during the project team’s beta testing, perhaps because the team tested the widgets on LibGuides, another Springshare application. In the live database environment, however, sometimes a single user would be met with multiple chat requests.

We tried to duplicate the problem on staff and library classroom computers, on different browsers, and off campus. Eventually, the team realized that browser cookie settings could interfere with the chat software’s ability to track the “No thanks” click history. Because users controlled their own browsers, we needed an easy way to explain the issue and offer instructions to both users and the reference staff fielding chats. We created a Frequently Asked Questions entry that explained the need to allow third-party cookies, with links to directions for adjusting the settings in major browsers. For some users, this worked. Cookie settings accounted for only a portion of the repeat offers, however, and the issue persisted. The project team continued to troubleshoot but could not identify a clear set of circumstances in which the chat box would ignore users’ preference. Because the team could not consistently reproduce the pattern and did not have access to the database software code, further troubleshooting by the project team was not possible.
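The sketch below models why blocked third-party cookies could make the widget forget a “No thanks” click. It is a simplified, hypothetical model: the `CookieJar` class and the `chat_dismissed` cookie name are our inventions, and we assume, as our FAQ instructions implied, that inside a database page the widget’s cookie counts as third-party because the chat service is served from a different domain than the database.

```python
from typing import Dict, Optional


class CookieJar:
    """Hypothetical stand-in for a browser's cookie storage."""

    def __init__(self, allow_third_party: bool) -> None:
        self.allow_third_party = allow_third_party
        self._cookies: Dict[str, str] = {}

    def set(self, name: str, value: str, third_party: bool) -> None:
        # A browser that blocks third-party cookies drops the write silently.
        if third_party and not self.allow_third_party:
            return
        self._cookies[name] = value

    def get(self, name: str) -> Optional[str]:
        return self._cookies.get(name)


def record_no_thanks(jar: CookieJar) -> None:
    # Assumed: inside a database page, the widget's cookie is third-party.
    jar.set("chat_dismissed", "1", third_party=True)


def should_offer_chat(jar: CookieJar) -> bool:
    # With no remembered dismissal, the next page load offers chat again.
    return jar.get("chat_dismissed") != "1"


for allowed in (True, False):
    jar = CookieJar(allow_third_party=allowed)
    record_no_thanks(jar)
    print(allowed, should_offer_chat(jar))
# True False
# False True
```

This accounts only for the cookie-related share of the problem; as noted above, other repeat offers had no cause we could identify.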

In some cases, this technical annoyance interrupted library instruction sessions, forcing librarians to explain it to a classroom of students. For some colleagues, the interruption offered a chance to promote the service; for others, it was simply a disruption. Despite the occasional unpredictable widget behavior, the project team determined that the issue was neither frequent enough nor a significant enough deterrent to users to warrant discontinuing the proactive chat service entirely.

Non-transferable Conversation

Back in the widget formation stage, the project team chose the slide-out widget style in part because the chat conversation embedded in the originating page followed the user as they navigated across pages. This behavior enabled the user to maintain a stable connection to the chat agent and allowed the conversation to partially overlay the active page. However, in September 2017, several months after going live, the behavior of the chat boxes changed, so that chat conversations in progress did not automatically populate onto new pages. When users discovered that the new webpage did not contain their in-progress chat, they were left to search for the conversation or start another. Some users navigated backward to the page in which the chat began. If it took longer than 30 seconds for the user to find the correct page and add another comment to the chat, the software treated the conversation as dormant and ended the chat. When this happened, the user would find that the text box no longer accepted messages and instead displayed the end-of-chat survey or a fresh chat box. On the librarian’s side, the chat box turned red and no further comments could be added.
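The disconnection pattern described above can be modeled roughly as follows. This is a hypothetical sketch of the behavior we observed, not the vendor’s logic: we assume the 30-second dormancy clock starts when the user leaves the page holding the conversation, and all names are illustrative.

```python
from dataclasses import dataclass
from typing import Optional

DORMANT_AFTER_S = 30.0  # observed threshold; when the clock starts is assumed


@dataclass
class ChatSession:
    """Hypothetical model of a conversation tied to its originating page."""

    left_page_at: Optional[float] = None  # when the user navigated away
    is_open: bool = True

    def leave_page(self, now: float) -> None:
        if self.is_open:
            self.left_page_at = now

    def resume(self, now: float) -> bool:
        """User finds the original page and types; True if the chat is live."""
        if self.is_open and self.left_page_at is not None:
            if now - self.left_page_at > DORMANT_AFTER_S:
                # Treated as dormant: in the observed behavior, the user then
                # saw the end-of-chat survey or a fresh chat box.
                self.is_open = False
        self.left_page_at = None
        return self.is_open


session = ChatSession()
session.leave_page(now=100.0)
print(session.resume(now=120.0))  # True: back within 30 seconds
session.leave_page(now=200.0)
print(session.resume(now=245.0))  # False: 45 seconds away, chat ended
```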

This unanticipated behavior change caused a great deal of confusion for users and left staff scrambling to make sense of what was happening. As with the “No thanks” issue, impromptu troubleshooting sessions took place when users alerted reference staff to the problem. Reference staff had to juggle problem-solving while also assisting users with their information needs. The remote location of chat users further complicated these efforts because staff could not see which steps led to the disconnections. Only after several days of increased disconnections and frantic reconnections, during a busy time of the semester, did we understand that the behavior of the widget had changed.

Even though we now knew what the problem was, there was little the Library could do to solve it. Troubleshooting was difficult, since we could not view or edit the proprietary chat or database software code, and recreating users’ click histories and device behavior was impossible. We contacted the vendors; however, the three-way relationship among the two software companies and the Library created a zone of unclear responsibility that proved difficult to navigate. Resolution efforts over several months were ultimately ineffective. The project team decided to change the widget to the pop-up style, which allowed the chat conversation to take place in a new browser window, unaffected by user navigation in other windows.

Although this style was better at sustaining conversations, it required users to toggle between windows each time they wanted to add a comment. Additionally, users received no visual or auditory cue when the librarian added a comment, so they sometimes missed comments. We asked Springshare to adjust the chat code so that the minimized window would give a visual ping showing that a librarian was responding; however, this change was not made.

Vendor Control

While the Library appreciated Gale Cengage’s and EBSCO’s willingness to work with us, these collaborations also made us dependent on them. There was no formal documentation of the collaborative agreement, so the continuation of proactive chat service rested with the vendors. In February 2018, we discovered that our chat widgets no longer appeared in the Gale Cengage suite of databases. When we asked our vendor contact, we learned that the company’s refreshed platform no longer supported our widget, and subsequent requests for reinstatement were unsuccessful. The removal of proactive chat boxes from Gale Cengage databases cut in half the number of databases in which the proactive chat widget appeared. EBSCO continued to support proactive chat boxes in its databases.

An Idea to Address the Hitches

By March 2018, proactive chat had been in the databases for almost a year, and the technical issues had become apparent. We wanted to resolve each of the hitches: the disregard of the user’s choice of “No thanks,” the non-transferability of conversations across web pages, and the vulnerability of the service to removal by vendors. We formed a larger team of Library colleagues to evaluate options, including the library information technology program analysts, the web librarian, and an electronic resources expert. One person suggested placing proactive chat boxes on the proxy server, the interface that authenticates users’ credentials before granting access to subscription databases. If successful, this adaptation would give the Library more autonomy: we could overlay any or all of the subscription database platforms and would not have to rely on each vendor for initial implementation and sustained collaboration. With supplemental assistance from the Springshare tech team and a newly available ProQuest sandbox for testing, preliminary gains gave us hope. We added custom code to the proxy server content management software that started an additional timer after the user clicked “No thanks” and suppressed subsequent offers to chat for half an hour.

This seemed to cut down on the number of offers to chat but did not resolve the issue completely. The original behavioral inconsistencies reappeared with this proxy-layer solution, especially when we tested moving from one ProQuest database to another. The persistence of these issues seemed to confirm that the problem lay in the interaction between the chat widget code and the database code. Despite initial optimism, the team ultimately decided that without access to the proprietary code, the Library’s creative workarounds would not adequately resolve the original technical issues, and shelved further development of this idea.
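The half-hour suppression timer described above worked along the lines of the sketch below. The code is an illustrative reconstruction, not the custom code we added: the class name, the per-session dictionary, and the session identifiers are assumptions, and in a real deployment the dismissal time would need to persist across page loads rather than live in memory as it does here.

```python
from typing import Dict

SUPPRESS_FOR_S = 30 * 60  # half-hour window after "No thanks"


class OfferSuppressor:
    """Hypothetical per-session record of recent "No thanks" clicks."""

    def __init__(self) -> None:
        self._dismissed_at: Dict[str, float] = {}

    def record_no_thanks(self, session_id: str, now: float) -> None:
        self._dismissed_at[session_id] = now

    def may_offer(self, session_id: str, now: float) -> bool:
        # Offer chat unless this session declined within the last half hour.
        dismissed = self._dismissed_at.get(session_id)
        return dismissed is None or now - dismissed >= SUPPRESS_FOR_S


timer = OfferSuppressor()
timer.record_no_thanks("session-a", now=0.0)
print(timer.may_offer("session-a", now=600.0))   # False: 10 minutes later
print(timer.may_offer("session-a", now=1800.0))  # True: window has elapsed
print(timer.may_offer("session-b", now=600.0))   # True: never declined
```

A timer like this can only suppress offers when the dismissal is actually recorded, which is consistent with what we saw: fewer repeat offers, but not none.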

Gauging Usage

We knew the widgets were not behaving as expected, but it was not clear whether these hitches were affecting use. To understand usage, we analyzed transaction data recorded by the LibAnswers software. Although it collected robust metadata, the software did not track whether the chat was started by the user after the proactive widget slid out on its own, or as a result of the user clicking the stationary chat tab before the 30-second proactivity trigger. Because this was unknown, we could not draw direct conclusions about user and chat box interaction resulting from widget proactivity. The following data sets use the term “in-database proactive” to describe the originating widget, not the stage of widget activity when the user interacted with it.

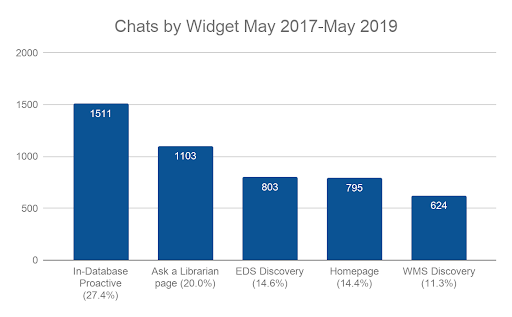

Use by Widget

The Library implemented live, proactive chat on May 3, 2017, and as of this writing (May 24, 2019) has received a total of 5,503 chats across all Library widgets. Of that total, 27.4% (n=1,511) originated in the in-database proactive chat widget, making it the top-producing chat widget. This number may be inflated by patrons attempting to reconnect after an interrupted interaction, as described previously, and additional research is needed to determine the exact number of disconnected and restarted interactions. Despite this possible inflation, the fact that some users were willing to restart a chat conversation indicates their interest in the service. Whether they responded to the proactive offer or clicked the stationary tab, users chose to chat when they were in the databases, and did so at a relatively high rate (Figure 2). Users have likely encountered the technical issues, yet their use of the in-database chat widget suggests that the issues have not significantly deterred them.

Figure 2. Top five performing chat widgets since proactive chat implementation.

This chart shows the number of chats fielded by the top five widgets over a two-year period.

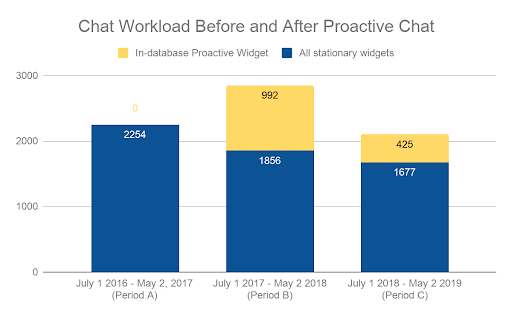

Workload

We had limited the project scope to avoid overwhelming reference capacity, and we needed to determine whether that decision was justified. To gauge the overall impact of the additional proactive chats, we compared the number of chat transactions that took place in subscription databases with all other chat transactions. The Library migrated to LibAnswers LibChat software in July 2016. Ten months later, in May 2017, proactive chat boxes in databases became available to patrons. From July 1, 2016, to May 2, 2017 (Period A), before proactive chat was implemented in the databases, there were a total of 2,254 chat interactions. From July 1, 2017, to May 2, 2018 (Period B), after proactive chat was introduced, the number of chat interactions jumped to 2,848, an increase of 26.4%. In the last period measured, Period C, chat transactions in both stationary and proactive widgets decreased from Period B, and the total fell below Period A levels (Figure 3).

Figure 3. Chat transactions originating from stationary widgets and the in-database proactive widget.

This chart shows that after the infusion of proactive chat transactions in Period B, the number of chats declined in Period C to below Period A levels.
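The 26.4% rise from Period A to Period B is a simple percentage change: 594 additional chats on a base of 2,254. The short Python check below reproduces it from the two reported totals; the function name is ours.

```python
def percent_change(before: int, after: int) -> float:
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100


period_a = 2254  # chats, July 1, 2016 - May 2, 2017 (before proactive chat)
period_b = 2848  # chats, July 1, 2017 - May 2, 2018 (after proactive chat)
print(f"{percent_change(period_a, period_b):.1f}%")  # 26.4%
```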

The introduction of proactive chat into the databases initially increased the overall chat load. Over time, however, all chat interactions fell, and initial gains provided by the introduction of proactive chat have been subsumed in the decline. In-database proactive chat load has not overwhelmed agents and the initial project scope was deemed appropriate.

In-database proactive chat transactions fell by 57% from Period B to Period C, and several factors may have contributed to this reduction. During fall 2017, approximately 20% of in-database proactive chat transactions originated from Gale Cengage databases, so the removal of the widget from that vendor’s 50 databases at the end of 2017 could account for part of the decrease seen in Period C. Also in fall 2017, the slide-out widget behavior changed, preventing some chat conversations from following the user. Because some users started new chats to regain their connection with reference staff, and the system records each conversation as a unique instance, some interactions were likely logged twice. Although it is impossible to know the exact number of disconnections caused by the widget behavior, a review of chat transcripts suggests they were more common in Period B. We changed from the slide-out style to the pop-up style in Period C, so this change may have reduced disconnections and, with them, users’ need to restart chats. Additional analyses will be possible as more data is collected in coming semesters.

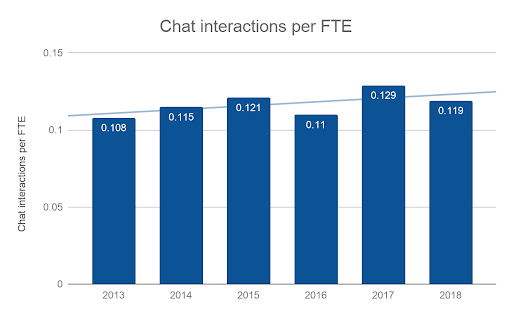

Several factors may be contributing to the overall decline in chat interactions at the Library. Taken as a whole, reference transactions via all modes (face-to-face, chat, email, SMS text, and phone) decreased from July 1, 2016, to May 2, 2019. When broken down by period, chat, email, and SMS text follow the same pattern, peaking in Period B. The number of views of the Frequently Asked Questions has risen each year, perhaps answering questions that staff would otherwise have fielded. Library instruction initiatives, both face-to-face and online, may also affect the number of questions asked by chat or other reference modes. Another possible reason for decreased reference transactions is that instructors may be accepting a wider range of resources found outside the Library’s online resources. Although enrollment at UNM has declined each year since 2014, the number of chat interactions per full-time equivalent (FTE) student has increased slightly (Office of Institutional Analytics, n.d.). The year 2017 saw the highest chat rate per student in recent years, a number perhaps influenced by the additional chats taking place within the databases (Figure 4).

Figure 4. Chat interactions per full-time equivalent (FTE) student enrollment.

The trendline shows a slight increase in the number of chats per student since 2013.

User Ratings from Post-chat Surveys

Usage statistics showed how users were interacting with the in-database proactive widget, but we also wanted to investigate users’ views of the quality of the interactions that took place within it. We looked at data collected via the post-chat survey, which used a 1 to 4 rating scale: 1 was bad, 2 was so-so, 3 was good, and 4 was excellent. Users could rate any aspect of the chat interaction, including but not limited to the proactivity or non-proactivity of the chat box.

The number of ratings received by the five most-used widgets followed the pattern of overall usage. The in-database proactive chat widget was the most used, received the most ratings (n=238), and had an average rating of 3.71 (Figure 5). The second-most-used chat box (Ask A Librarian) received the second-highest number of ratings (n=224) and had a slightly higher average rating of 3.77. The widget with the highest average rating was the WMS discovery chat box (3.83; n=87).

Although the average rating for the In-Database Proactive widget (3.71) tied for last place, it was only 0.12 behind the first-place widget. Users rated chats occurring in the In-Database Proactive widget “Excellent” 79% of the time. Moreover, the average ratings for all five widgets are well above “Good” (3). This consistency suggests that users did not associate the technical issues of the in-database proactive chat with negative chat ratings.

| Widget | Average Rating | Number of Ratings | % Excellent | % Good | % So-so | % Bad |
|---|---|---|---|---|---|---|
| WMS discovery | 3.83 | 87 | 91 | 2 | 6 | 1 |
| Ask a Librarian page | 3.77 | 224 | 85 | 10 | 2 | 3 |
| EBSCO Discovery Service | 3.73 | 195 | 82 | 11 | 4 | 3 |
| In-Database Proactive | 3.71 | 238 | 79 | 15 | 4 | 2 |
| Homepage | 3.71 | 164 | 78 | 18 | 2 | 2 |

The top five most-used widgets had comparable average ratings, commonly receiving the “Excellent” rating.
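Each average rating in the table above is a weighted mean of the 1 to 4 survey scale, with the rating percentages serving as weights. The sketch below recomputes the averages from the published percentages. Because those percentages are rounded to whole numbers, the recomputed values may differ from the reported ones by about 0.01. `average_from_percents` is an illustrative helper, not part of any survey tool.

```python
# Recompute each widget's average rating from its rating distribution.
# Post-chat survey scale: 4 = Excellent, 3 = Good, 2 = So-so, 1 = Bad.
# Percentages are rounded in the source, so small (~0.01) differences are expected.

SCORES = (4, 3, 2, 1)  # Excellent, Good, So-so, Bad

widgets = {
    # name: (reported average, % Excellent, % Good, % So-so, % Bad)
    "WMS discovery": (3.83, 91, 2, 6, 1),
    "Ask a Librarian page": (3.77, 85, 10, 2, 3),
    "EBSCO Discovery Service": (3.73, 82, 11, 4, 3),
    "In-Database Proactive": (3.71, 79, 15, 4, 2),
    "Homepage": (3.71, 78, 18, 2, 2),
}

def average_from_percents(percents):
    """Weighted mean of the 1-4 scale, using the percentages as weights."""
    return sum(s * p for s, p in zip(SCORES, percents)) / sum(percents)

recomputed = {}
for name, (reported, *percents) in widgets.items():
    recomputed[name] = average_from_percents(percents)
    print(f"{name:<24} reported {reported:.2f}  recomputed {recomputed[name]:.2f}")

# Gap between the top-rated widget and the in-database proactive widget.
best = max(widgets, key=lambda n: widgets[n][0])
gap = widgets[best][0] - widgets["In-Database Proactive"][0]
print(f"gap to {best}: {gap:.2f}")
```

For the In-Database Proactive widget this gives (4×79 + 3×15 + 2×4 + 1×2) / 100 = 3.71, and the gap to the top-rated WMS discovery widget is 3.83 − 3.71 = 0.12.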

Future Directions

Although we encountered technical hitches with this project, the project team considers this endeavor a success. Over a quarter (27.4%) of Library chat conversations came from inside database platforms, spaces previously devoid of librarian help, and users overwhelmingly rated their interactions as excellent. As we continue to assess this project, we would like to explore expanding proactive chat to more databases and the discovery tool, and to reach out to vendors that were previously unavailable. We plan to conduct usability tests with in-database proactive chat users to further investigate widget behavior and users’ perceptions. An in-depth analysis of the types of questions arising within the proactive chat widget is in development.

Additional opportunities to research proactive chat exist, especially in the realm of accessibility. Patrons who use screen readers and users who access proactive chat on mobile devices may encounter technical glitches beyond those outlined here. Licensing negotiation is another area of potential interest: license terms affirming vendor support for chat widgets could provide clarity in vendor collaboration.

As libraries across the United States work to reach users at their point of need through proactive chat help, momentum builds for deeper collaborations. Librarians, content providers, and library software companies may be able to make this heavily used service seamless and bridge the database divide.

Acknowledgements

I would like to thank Brett Nafziger for his influential work on this project during its formative stages. David Hurley has offered immense support for the project and throughout the writing process. Inga Barnello, Glenn Koelling, and Jeremiah Paschke-Wood provided thoughtful comments on an early bird draft, transforming this study from nestling to fledgling. Article reviewers Paula Dempsey and Ryan Randall offered keen insights with kind words. Thank you. Publishing editor Ian Beilin shepherded this piece through the publication process. Finally, I thank my University of New Mexico chat service colleagues whose expertise continues to propel users on their research journeys.

References

Aguilar, Paulita, Kathleen Keating, Suzanne Schadl, and Johann Van Reenen. “Reference as outreach: Meeting users where they are.” Journal of Library Administration 51, no. 4 (2011): 343-358. https://doi.org/10.1080/01930826.2011.556958

Epstein, Michael. “That thing is so annoying: How proactive chat helps us reach more users.” College & Research Libraries News 79, no. 8 (2018): 436. https://doi.org/10.5860/crln.79.8.436

Fan, Suhua Caroline, Rick L. Fought, and Paul C. Gahn. “Adding a Feature: Can a Pop-Up Chat Box Enhance Virtual Reference Services?” Medical Reference Services Quarterly 36, no. 3 (2017): 220-228. https://doi.org/10.1080/02763869.2017.1332143

Hockey, Julie Michelle. “Transforming library enquiry services: Anywhere, anytime, any device.” Library Management 37, no. 3 (2016): 125-135. https://doi.org/10.1108/LM-04-2016-0021

Imler, Bonnie Brubaker, Kathryn Rebecca Garcia, and Nina Clements. “Are reference pop-up widgets welcome or annoying? A usability study.” Reference Services Review 44, no. 3 (2016): 282-291. https://doi.org/10.1108/RSR-11-2015-0049

Kemp, Jan H., Carolyn L. Ellis, and Krisellen Maloney. “Standing by to help: Transforming online reference with a proactive chat system.” The Journal of Academic Librarianship 41, no. 6 (2015): 764-770. https://doi.org/10.1016/j.acalib.2015.08.018

Kemp, Jan H., and Marjorie Warner. “Transforming Online Reference with a Proactive Chat System.” Reference and User Services Association webinar: May 19, 2016.

Mu, Xiangming, Alexandra Dimitroff, Jeanette Jordan, and Natalie Burclaff. “A survey and empirical study of virtual reference service in academic libraries.” The Journal of Academic Librarianship 37, no. 2 (2011): 120-129. https://doi.org/10.1016/j.acalib.2011.02.003

Office of Institutional Analytics. “Official Enrollment Reports.” University of New Mexico. Accessed July 31, 2019: http://oia.unm.edu/facts-and-figures/official-enrollment-reports.html

Office of the Vice President for Research. “Welcome from the OVPR.” University of New Mexico. Accessed June 21, 2019: https://research.unm.edu/welcome

Pyburn, Lydia L. “Implementing a Proactive Chat Widget in an Academic Library.” Journal of Library & Information Services in Distance Learning 13, no. 1-2 (2019): 115-128. http://doi.org/10.1080/1533290X.2018.1499245

Rich, Linda, and Vera Lux. “Reaching Additional Users with Proactive Chat.” The Reference Librarian 59, no. 1 (2018): 23-34. https://doi.org/10.1080/02763877.2017.1352556

Schofield, Michael. “Applying the KANO MODEL to Improve UX.” Computers in Libraries 36, no. 8 (2016): 28-32.

Springshare. “Springshare Privacy Policy.” Accessed August 14, 2019: https://springshare.com/privacy.html

Springshare. “GDPR Compliance.” Accessed August 14, 2019: https://www.springshare.com/gdpr.html

Zhang, Jie, and Nevin Mayer. “Proactive chat reference: Getting in the users’ space.” College & Research Libraries News 75, no. 4 (2014): 202-205. https://doi.org/10.5860/crln.75.4.9107

Appendix

Full Description of Figure 2. Top five performing chat widgets since proactive chat implementation.

The five widgets that produced the most chats during the period of May 2017 to May 2019, in descending order, are:

- In-database proactive, which had 1511 chats, accounting for 27.4% of all chat interactions

- Ask a Librarian page, which had 1103 chats, accounting for 20.0% of all chat interactions

- EDS Discovery, which had 803 chats, accounting for 14.6% of all chat interactions

- Homepage, which had 795 chats, accounting for 14.4% of all chat interactions

- WMS Discovery, which had 624 chats, accounting for 11.3% of all chat interactions
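The Figure 2 percentages are shares of all chat interactions, a total the article does not state. As an illustrative consistency check, the brute-force search below finds every overall total for which all five published counts round to the published one-decimal shares. The names `top_five` and `consistent` are hypothetical, and the result is only the range implied by the rounded figures, not a reported number.

```python
# Which overall chat totals are consistent with the rounded shares reported
# for the top five widgets (May 2017 to May 2019)?

top_five = {
    # widget: (chats, reported share of all chats, in percent)
    "In-database proactive": (1511, 27.4),
    "Ask a Librarian page": (1103, 20.0),
    "EDS Discovery": (803, 14.6),
    "Homepage": (795, 14.4),
    "WMS Discovery": (624, 11.3),
}

def consistent(total):
    """True if every widget's share, rounded to 0.1%, matches the report."""
    return all(
        round(100 * chats / total, 1) == share
        for chats, share in top_five.values()
    )

# The overall total can be no smaller than the top five combined.
top_five_chats = sum(chats for chats, _ in top_five.values())
candidates = [t for t in range(top_five_chats, 10_000) if consistent(t)]

print(f"top five combined: {top_five_chats} chats")
print(f"implied total: {min(candidates)} to {max(candidates)} chats")
```

The search should report 4,836 chats for the top five combined and an implied overall total of 5,505 to 5,518 chats, so the top five widgets together account for roughly 88% of all chats in this period.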

Full Description of Figure 3. Chat transactions originating from stationary widgets and in-database proactive widget.

- Period A (July 1, 2016 – May 2, 2017)
  - 2254 from the stationary widgets
  - 0 from the In-database Proactive Chat widget
  - 2254 total chat transactions
- Period B (July 1, 2017 – May 2, 2018)
  - 1856 from the stationary widgets
  - 992 from the In-database Proactive Chat widget
  - 2848 total chat transactions
- Period C (July 1, 2018 – May 2, 2019)
  - 1677 from the stationary widgets
  - 425 from the In-database Proactive Chat widget
  - 2102 total chat interactions
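Each period’s total in Figure 3 is the sum of stationary and in-database chats. The sketch below verifies those sums and computes the in-database widget’s share of chats per period, using only the counts listed above; the dictionary layout is an illustrative choice.

```python
# Check the Figure 3 totals and compute the in-database widget's share
# of chats in each period.

periods = {
    # period: (stationary widgets, in-database proactive, reported total)
    "A": (2254, 0, 2254),    # July 1, 2016 - May 2, 2017
    "B": (1856, 992, 2848),  # July 1, 2017 - May 2, 2018
    "C": (1677, 425, 2102),  # July 1, 2018 - May 2, 2019
}

shares = {}
for period, (stationary, in_database, reported_total) in periods.items():
    total = stationary + in_database
    # The reported total should equal the sum of the two sources.
    assert total == reported_total, f"Period {period}: totals disagree"
    shares[period] = 100 * in_database / total
    print(f"Period {period}: {total} chats, {shares[period]:.1f}% in-database")
```

The in-database widget accounts for about 34.8% of chats in Period B and about 20.2% in Period C.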

Full Description of Figure 4. Chat interactions per Full Time Equivalent (FTE) student enrollment, by year.

Chat interactions per FTE student, by year:

- 2013 is 0.108

- 2014 is 0.115

- 2015 is 0.121

- 2016 is 0.11

- 2017 is 0.129

- 2018 is 0.119
